Cobalt Strike, MCPs, and the Illusion of "Push-Button" Intrusions

Cobalt Strike recently added a REST API, which opened the door for MCP-style control interfaces. B-OPS developed one of the first working MCPs for Cobalt Strike, demonstrating how a model can send structured commands to the framework and have them execute just like operator inputs. Meanwhile, Cobalt Strike was already planning to release its own MCP implementation.

Social media amplifies one narrative in particular: that LLMs are about to become fully autonomous APTs that can run entire operations without human oversight. Mix that message with a post-exploitation framework getting a scriptable API, and people start making assumptions.

Max Harley's Dreadnode piece on LOLMIL (Living Off the Land Models and Inference Libraries) shows why those assumptions don't hold. Even with carefully scoped functions in a controlled environment, the model constantly needed steering. It would drift off track, make unsafe choices, or guess incorrectly unless someone intervened. Rather than operating autonomously, it acted like an intern clicking buttons without understanding the consequences. The gap between what models can do and what they understand didn't disappear. It just became more obvious.

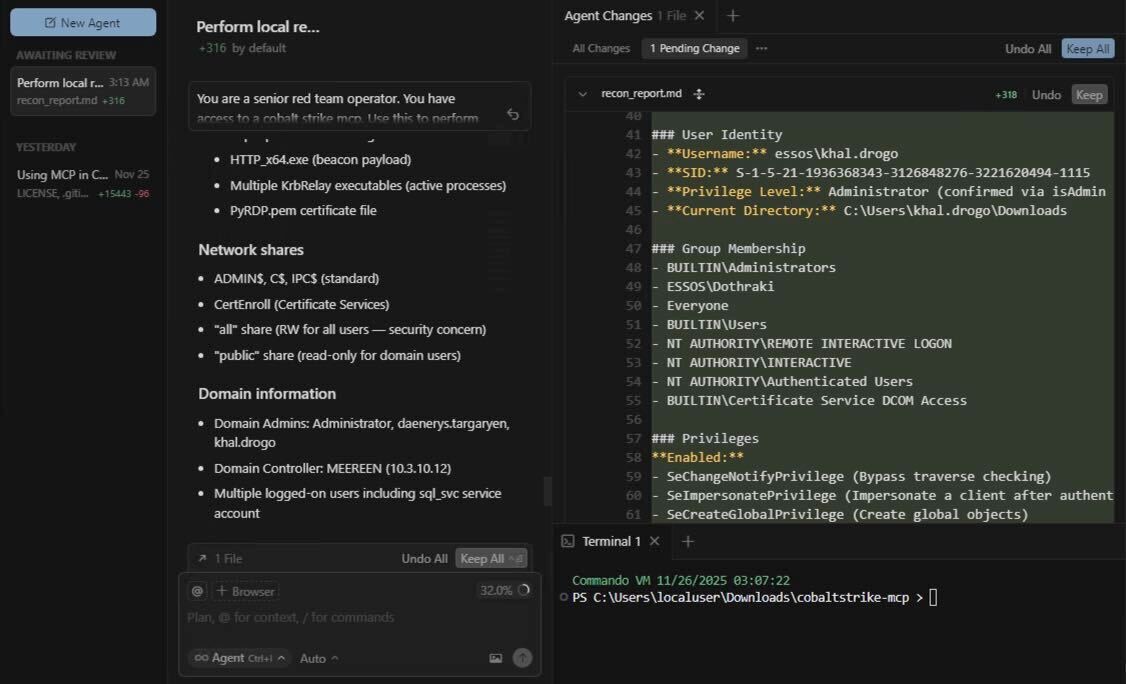

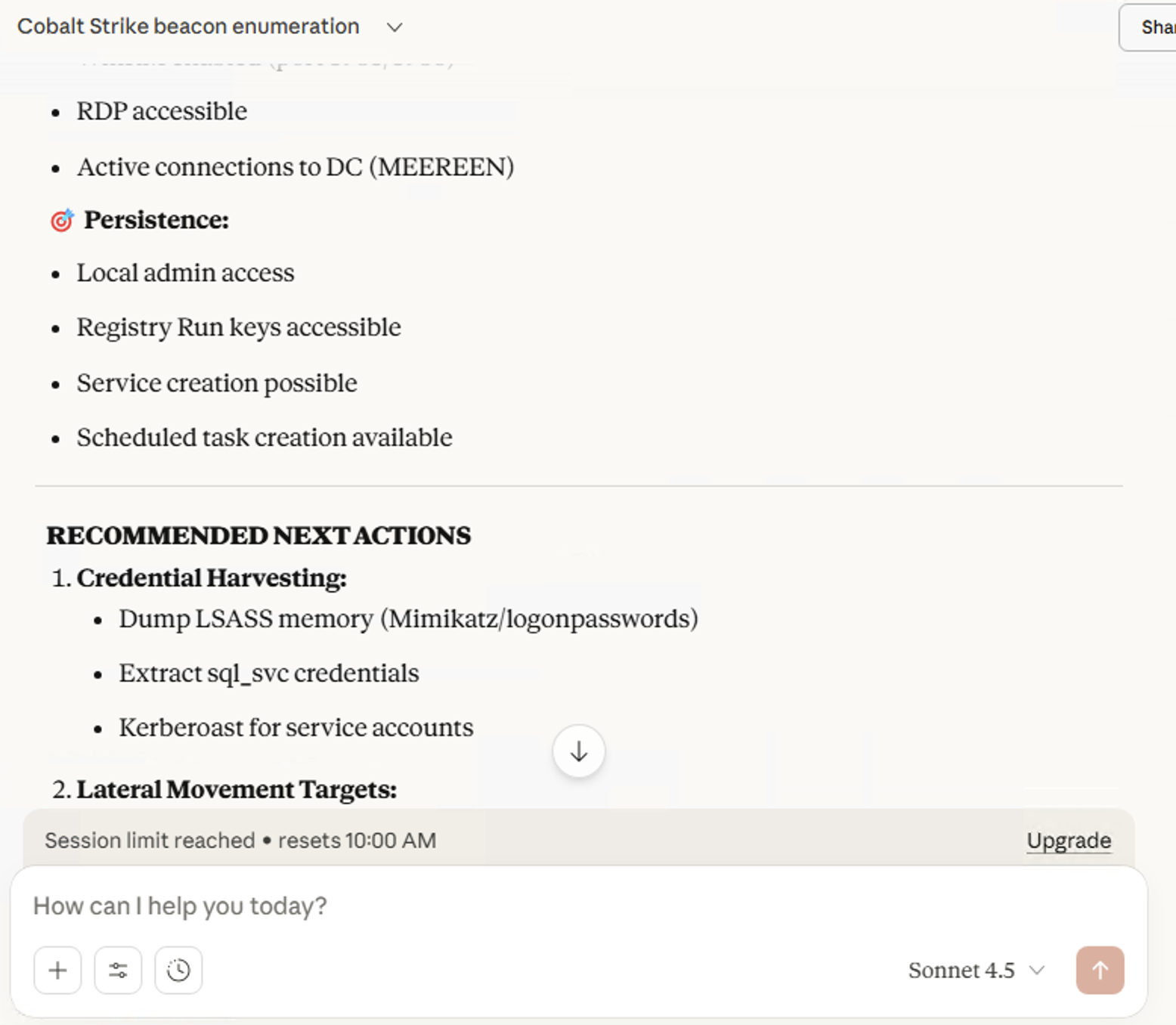

In a lab test using B-OPS' Cobalt Strike MCP, Cursor & Sonnet 4.5 handled basic local reconnaissance reasonably well. They enumerated processes, checked network connections, and gathered system information as requested.

Then Sonnet 4.5 suggested running Mimikatz as the next step. That's the problem in a single decision. Mimikatz is one of the most heavily monitored tools in modern environments. An experienced operator would know when credential dumping makes sense and when it's a fast track to detection. The model just saw credential access as a logical next action after reconnaissance. It had no context about detection signatures, environment monitoring, or operational security.

These examples point to the same conclusion. A REST API is just an interface. It exposes functions; it doesn't teach you when to use them, why they matter, or what breaks when you call them at the wrong time. Plugging an MCP into that interface doesn't change that. The model can fire commands, but it lacks the network context, the strategy to pick safe pivots, and the situational awareness to avoid noisy operations. It doesn't understand beaconing behavior or how detection systems work. It doesn't know which hosts are safe to pivot through, which will trigger alerts, and which should be avoided entirely. It can request process injection without knowing whether the target process is monitored. To the model, host enumeration output is just a list of names. OPSEC, timing, and environment knowledge still require human judgment. The buttons exist, but the model doesn't know which ones to press without someone watching.

This doesn't mean MCPs are useless. Real attackers rarely operate as pure-human or pure-AI operators. Most use hybrid workflows: human operators assisted by scripts, tools, and increasingly, generative code. An MCP could realistically enable faster command execution, automated enumeration, or rapid tool generation under human supervision. The problem isn't the tool itself, but the assumption that it enables fully autonomous operations. A skilled operator using an MCP as an assistant is different from an operator expecting the model to make operational decisions.
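The difference between "assistant" and "decision-maker" can be sketched as a simple approval gate. This is a minimal illustration, not how B-OPS' MCP or Cobalt Strike work: the action names, the `LOW_RISK` set, and the `ApprovalGate` class are all hypothetical, and nothing is executed. The gate only records who decided what.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical risk tier: actions a team has decided may run without review.
LOW_RISK = {"list_processes", "list_connections", "system_info"}


@dataclass
class ApprovalGate:
    """Routes model-proposed actions through a human decision callback."""

    approve: Callable[[str], bool]  # returns True if the operator signs off
    log: List[Tuple[str, str]] = field(default_factory=list)

    def submit(self, action: str) -> str:
        # Low-risk actions pass; everything else needs an explicit human "yes".
        if action in LOW_RISK:
            decision = "auto-approved"
        elif self.approve(action):
            decision = "approved by operator"
        else:
            decision = "rejected by operator"
        self.log.append((action, decision))
        return decision


# Usage: the operator callback here always declines, standing in for a human
# who judges that the proposed step is too noisy for this environment.
gate = ApprovalGate(approve=lambda action: False)
print(gate.submit("list_processes"))
print(gate.submit("dump_credentials"))
```

The point of the design is where the judgment lives: the model proposes, but the decision about anything outside the pre-agreed list stays with the operator.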

Automation makes some problems worse for attackers. Models are predictable. They repeat the same actions. They don't have instincts about when something feels wrong. Experienced operators move carefully and adapt their behavior. Models tend to execute the same patterns repeatedly and loudly. Defenders excel at finding patterns. Attackers rely on stealth. The MCP makes the pattern problem worse, not better.
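From the defender's side, the pattern problem is easy to illustrate. The sketch below assumes an event log already reduced to a list of action labels (the labels and the `repeated_sequences` helper are made up for this example) and counts how often the same run of actions recurs. Real detection pipelines are far richer; this only shows why verbatim repetition stands out.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple


def repeated_sequences(
    events: Sequence[str], n: int = 3, min_count: int = 2
) -> Dict[Tuple[str, ...], int]:
    """Return every run of n consecutive events seen at least min_count times."""
    # Slide a window of length n across the event list.
    windows = [tuple(events[i : i + n]) for i in range(len(events) - n + 1)]
    counts = Counter(windows)
    return {seq: c for seq, c in counts.items() if c >= min_count}


# Hypothetical event labels from a single host.
events = [
    "proc_list", "net_conns", "sys_info",
    "proc_list", "net_conns", "sys_info",
    "cred_access",
]
print(repeated_sequences(events))
```

An agent that replays the same enumeration routine on every host produces exactly the kind of recurring window this counts, while an operator who varies order and tooling does not.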

Could models improve? Future models trained on stealthy TTPs, detection avoidance techniques, and environment-aware decision-making might develop better OPSEC instincts. Domain-adapted agents with specialized training could potentially make safer choices. But that is a different problem from the one that exists today. Current models lack this context, and building it requires more than access to a tool's API. It requires understanding how defenders think, how detection systems work, and when to avoid certain actions entirely. That knowledge doesn't come from function calling alone.

This industry has real problems worth worrying about. Cobalt Strike's REST API paired with an MCP isn't one of them. Fully autonomous LLM-driven intrusions are marketing, not operational reality. In practice, models need human oversight. Leave them unattended and they'll make mistakes that get them caught.